UNSW NLP Group

- language model evaluation

- efficient transformers

- narrative generation

- LLM personalisation

- clinical triage

- legal text

- multimodal systems

- dialects and language varieties

- LGBTQIA+ communities

- speech for second language speakers

Interested in joining? Email us with [Expression of Interest: Type] in the subject, where Type is one of:

Recent News

Far Out — evaluating language models on slang in Australian and Indian English — accepted to VarDial @ EACL 2026. | |

Amrita’s system paper on Arabic medical text classification accepted to AbjadNLP @ EACL 2026. | |

TriageSim — a conversational emergency triage simulation framework from electronic health records — released as a Python package. | |

Charles’s paper on evaluating LLMs for masked sentence prediction accepted to IJCNLP-AACL 2025. | |

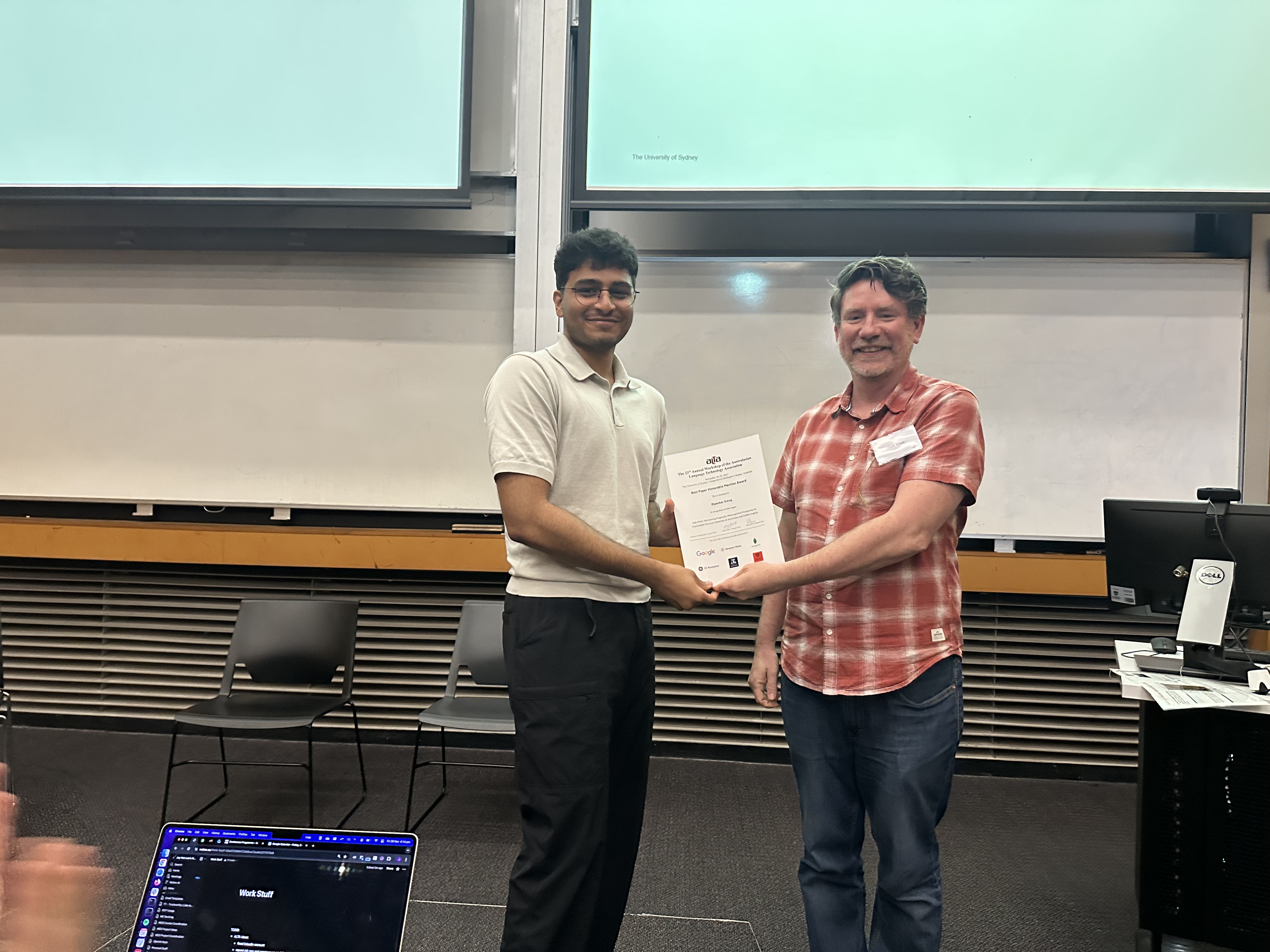

Nek Minit received the Best Paper Honorable Mention at ALTA 2025, co-organised by Aditya. | |

Amrita’s survey on classification tasks for legal contracts accepted to Artificial Intelligence Review. | |

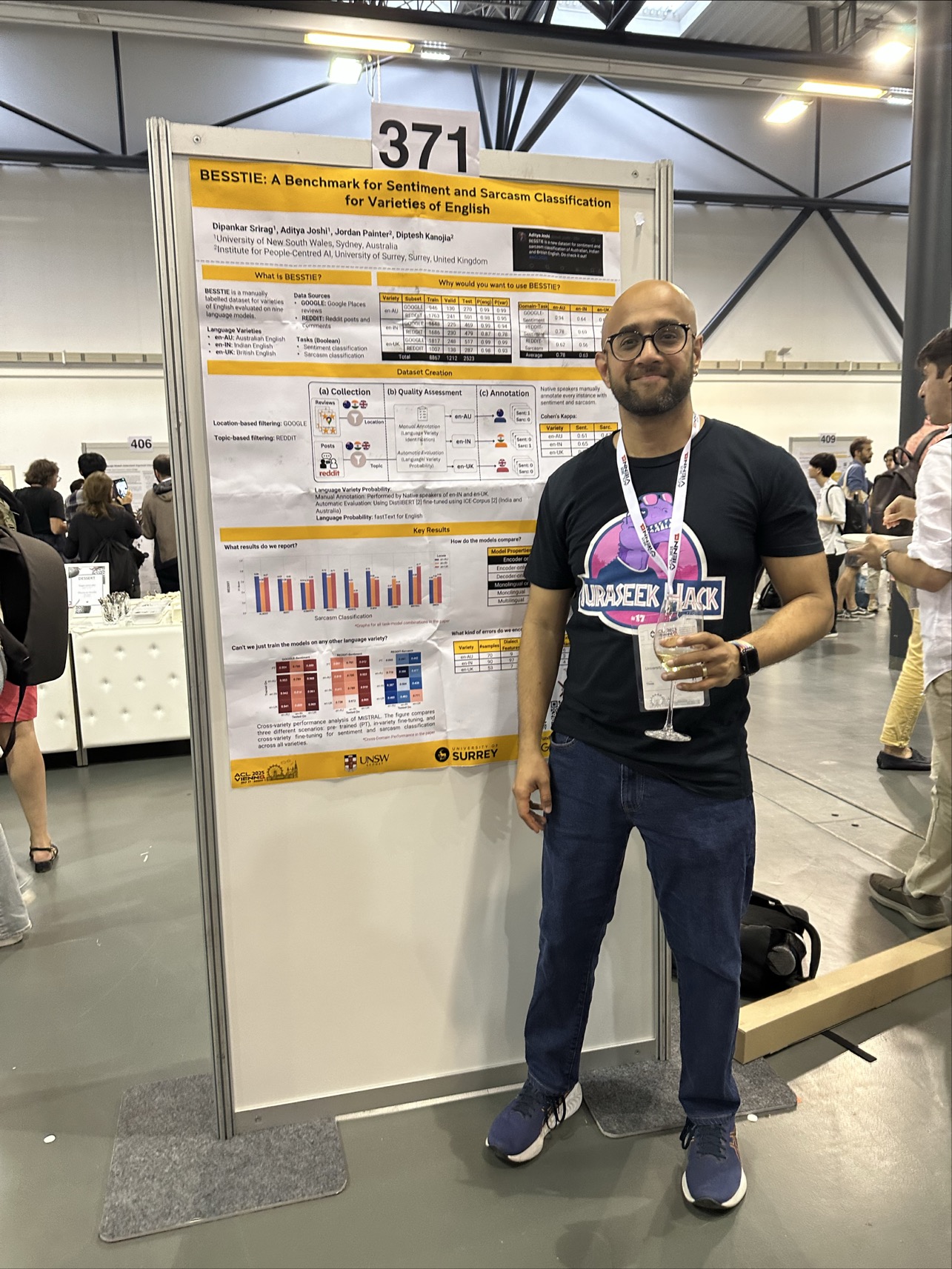

BESSTIE — a benchmark for sentiment and sarcasm across varieties of English — accepted to Findings of ACL 2025. Dataset on HuggingFace. | |

RACCOON — retrieval-augmented geocoding for news articles — accepted to TheWebConf 2025. | |

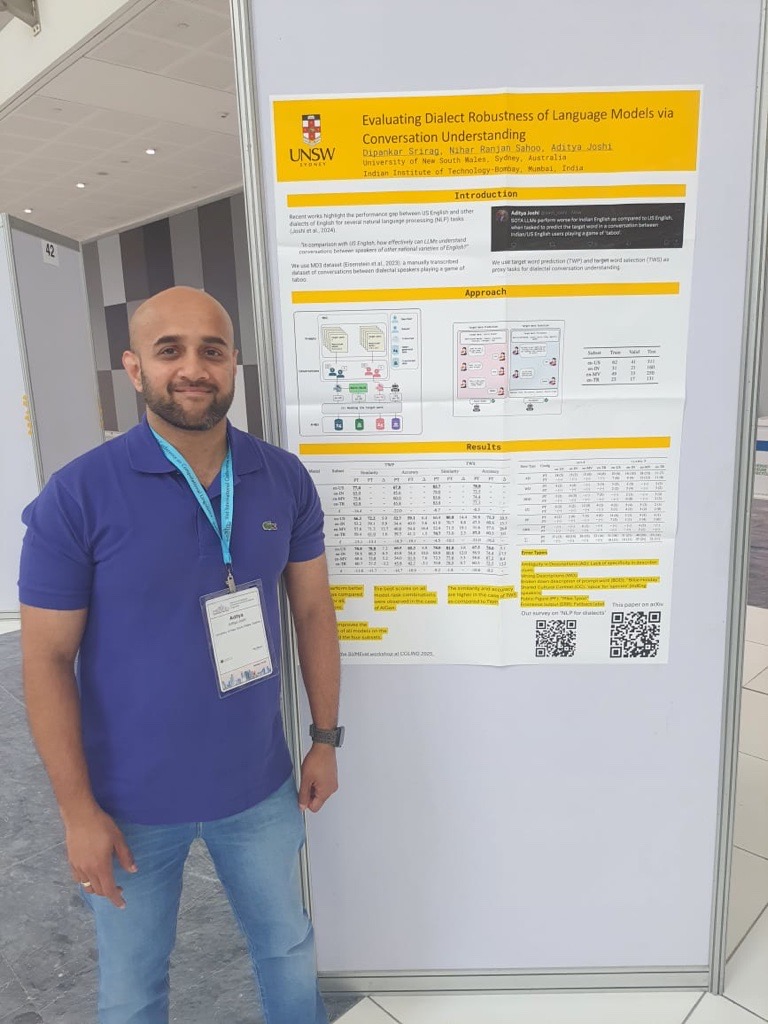

Dipankar presented our work on dialect adaptation for game-playing conversations at NAACL 2025 in Albuquerque. | |

Aditya’s survey on NLP for dialects of a language published in ACM Computing Surveys. |

Selected Publications

BibTeX

@inproceedings{wyatt-etal-2025-missing,

title = "What am {I} missing here?: Evaluating Large Language Models for Masked Sentence Prediction",

author = "Wyatt, Charlie and

Joshi, Aditya and

Salim, Flora D.",

editor = "Inui, Kentaro and

Sakti, Sakriani and

Wang, Haofen and

Wong, Derek F. and

Bhattacharyya, Pushpak and

Banerjee, Biplab and

Ekbal, Asif and

Chakraborty, Tanmoy and

Singh, Dhirendra Pratap",

booktitle = "Proceedings of the 14th International Joint Conference on Natural Language Processing and the 4th Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics",

month = dec,

year = "2025",

address = "Mumbai, India",

publisher = "The Asian Federation of Natural Language Processing and The Association for Computational Linguistics",

url = "https://aclanthology.org/2025.ijcnlp-short.24/",

doi = "10.18653/v1/2025.ijcnlp-short.24",

pages = "273--283",

ISBN = "979-8-89176-299-2",

abstract = "Transformer-based models primarily rely on Next Token Prediction (NTP), which predicts the next token in a sequence based on the preceding context. However, NTP{'}s focus on single-token prediction often limits a model{'}s ability to plan ahead or maintain long-range coherence, raising questions about how well LLMs can predict longer contexts, such as full sentences within structured documents. While NTP encourages local fluency, it provides no explicit incentive to ensure global coherence across sentence boundaries{---}an essential skill for reconstructive or discursive tasks. To investigate this, we evaluate three commercial LLMs (GPT-4o, Claude 3.5 Sonnet, and Gemini 2.0 Flash) on Masked Sentence Prediction (MSP) {---} the task of infilling a randomly removed sentence {---} from three domains: ROCStories (narrative), Recipe1M (procedural), and Wikipedia (expository). We assess both fidelity (similarity to the original sentence) and cohesiveness (fit within the surrounding context). Our key finding reveals that commercial LLMs, despite their superlative performance in other tasks, are poor at predicting masked sentences in low-structured domains, highlighting a gap in current model capabilities."

}

Copied!BibTeX

@inproceedings{singh-etal-2025-nek,

title = "{N}ek Minit: Harnessing Pragmatic Metacognitive Prompting for Explainable Sarcasm Detection of {A}ustralian and {I}ndian {E}nglish",

author = "Singh, Ishmanbir and

Srirag, Dipankar and

Joshi, Aditya",

editor = "Kummerfeld, Jonathan K. and

Joshi, Aditya and

Dras, Mark",

booktitle = "Proceedings of the 23rd Annual Workshop of the Australasian Language Technology Association",

month = nov,

year = "2025",

address = "Sydney, Australia",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2025.alta-main.2/",

pages = "13--27",

ISBN = "1834-7037",

abstract = "Sarcasm is a challenge to sentiment analysis because of the incongruity between stated and implied sentiment. The challenge is exacerbated when the implication may be relevant to a specific country or geographical region. Pragmatic metacognitive prompting (PMP) is a cognition-inspired technique that has been used for pragmatic reasoning. In this paper, we harness PMP for explainable sarcasm detection for Australian and Indian English, alongside a benchmark dataset for standard English. We manually add sarcasm explanations to an existing sarcasm-labeled dataset for Australian and Indian English called BESSTIE, and compare the performance for explainable sarcasm detection for them with FLUTE, a standard English dataset containing sarcasm explanations. Our approach utilising PMP when evaluated on two open-weight LLMs (GEMMA and LLAMA) achieves statistically significant performance improvement across all tasks and datasets when compared with four alternative prompting strategies. We also find that alternative techniques such as agentic prompting mitigate context-related failures by enabling external knowledge retrieval. The focused contribution of our work is utilising PMP in generating sarcasm explanations for varieties of English."

}

Copied!BibTeX

@article{Singh2025,

title = {A survey of classification tasks and approaches for legal contracts},

volume = {58},

ISSN = {1573-7462},

url = {http://dx.doi.org/10.1007/s10462-025-11359-8},

DOI = {10.1007/s10462-025-11359-8},

number = {12},

journal = {Artificial Intelligence Review},

publisher = {Springer Science and Business Media LLC},

author = {Singh, Amrita and Joshi, Aditya and Jiang, Jiaojiao and Paik, Hye-young},

year = {2025},

month = oct

}

Copied!BibTeX

@inproceedings{srirag-etal-2025-besstie,

title = "{BESSTIE}: A Benchmark for Sentiment and Sarcasm Classification for Varieties of {E}nglish",

author = "Srirag, Dipankar and

Joshi, Aditya and

Painter, Jordan and

Kanojia, Diptesh",

editor = "Che, Wanxiang and

Nabende, Joyce and

Shutova, Ekaterina and

Pilehvar, Mohammad Taher",

booktitle = "Findings of the Association for Computational Linguistics: ACL 2025",

month = jul,

year = "2025",

address = "Vienna, Austria",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2025.findings-acl.441/",

doi = "10.18653/v1/2025.findings-acl.441",

pages = "8413--8429",

ISBN = "979-8-89176-256-5",

abstract = "Despite large language models (LLMs) being known to exhibit bias against non-mainstream varieties, there are no known labeled datasets for sentiment analysis of English. To address this gap, we introduce BESSTIE, a benchmark for sentiment and sarcasm classification for three varieties of English: Australian (en-AU), Indian (en-IN), and British (en-UK). Using web-based content from two domains, namely, Google Place reviews and Reddit comments, we collect datasets for these language varieties using two methods: location-based and topic-based filtering. Native speakers of the language varieties manually annotate the datasets with sentiment and sarcasm labels. To assess whether the dataset accurately represents these varieties, we conduct two validation steps: (a) manual annotation of language varieties and (b) automatic language variety prediction. We perform an additional annotation exercise to validate the reliance of the annotated labels. Subsequently, we fine-tune nine large language models (LLMs) (representing a range of encoder/decoder and mono/multilingual models) on these datasets, and evaluate their performance on the two tasks. Our results reveal that the models consistently perform better on inner-circle varieties (i.e., en-AU and en-UK), with significant performance drops for en-IN, particularly in sarcasm detection. We also report challenges in cross-variety generalisation, highlighting the need for language variety-specific datasets such as ours. BESSTIE promises to be a useful evaluative benchmark for future research in equitable LLMs, specifically in terms of language varieties. The BESSTIE dataset is publicly available at: \url{https://huggingface.co/datasets/unswnlporg/BESSTIE}."

}

Copied!BibTeX

@inproceedings{10.1145/3701716.3715501,

author = {Lin, Jonathan and Joshi, Aditya and Paik, Hye-young and Doung, Tri Dung and Gurdasani, Deepti},

title = {RACCOON: A Retrieval-Augmented Generation Approach for Location Coordinate Capture from News Articles},

year = {2025},

isbn = {9798400713316},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3701716.3715501},

doi = {10.1145/3701716.3715501},

abstract = {Geocoding involves automatic extraction of location coordinates of incidents reported in news articles, and can be used for epidemic intelligence or disaster management. This paper introduces Retrieval-Augmented Coordinate Capture Of Online News articles (RACCOON), an open-source geocoding approach that extracts geolocations from news articles. RACCOON uses a retrieval-augmented generation (RAG) approach where candidate locations and associated information are retrieved in the form of context from a location database, and a prompt containing the retrieved context, location mentions and news articles is fed to an LLM to generate the location coordinates. Our evaluation on three datasets, two underlying LLMs, three baselines and several ablation tests based on the components of RACCOON demonstrate the utility of RACCOON. To the best of our knowledge, RACCOON is the first RAG-based approach for geocoding using pre-trained LLMs.},

booktitle = {Companion Proceedings of the ACM on Web Conference 2025},

pages = {1123–1127},

numpages = {5},

keywords = {geocoding, large language models, location extraction, news articles, rag, retrieval-augmented generation},

location = {Sydney NSW, Australia},

series = {WWW '25}

}

Copied!